The TBM Chip: The Next Step in Particle Detection

By Alexander Armstrong III

Supervisor: Dr. Andrew Ivanov

Kansas State University Physics Department REU Program

Welcome to my webpage. This page summarizes my work with the REU at KSU working within the HEP group to test the TBM chip which manages ROCs in the CMS detector at the LHC for CERN. Don’t mind if that made little sense. I include the acronyms and initialisms to poke fun at what I found to be a humorous obsession with such things in academia that make it particularly difficult for undergraduate researchers to get up to speed. Hopefully, this website gives anyone reading a good sense of what I did at Kansas State this summer while being engaging and understandable.


My project is part of the larger

human endeavor to understand the most basic stuff of which our world is made.

By understanding what our world is made of we are in a more privileged position

to make sense of why things do what they do. Developing technology hinges on

both an improved understanding of the things we need to build as well as how we

plan to build them. Research in particle physics has led to improvements in a

variety of fields of society such as medicine, national security, and computing.

Two quick examples can illustrate this. First, radioactive materials are radioactive

because they release lots of particles at high speeds that can be damaging to

human tissue. Well, improvements in particle detectors have given us improved nuclear

waste monitoring systems to better ensure the safety of nuclear power plants.

Second is the one that everyone is familiar with, namely, the internet. Yep.

The World Wide Web was developed by particle physicists looking for a way to

share data quickly and effectively. So particle physics benefits technology

because making advancements in particle physics requires making technological

jumps that benefit everybody. For an excellent 1-page glance at the variety of

ways research into particle physics has benefitted society follow this

link to a pdf file put out by Fermi National Accelerator Laboratory.

Beyond the technological applications,

understanding the physical world can itself be a valuable thing that prompts

greater philosophical reflection on who we are and how everything got here in

the first place. My main interest in particle physics comes from its

fascinating ability to get us to think about the world in which we live. We may

not be just a big heap of particles, but we surely have a lot of them in us, and

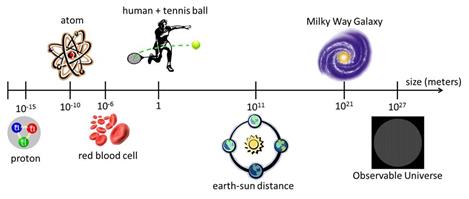

that fact is fascinating. There are likely to be more fundamental particles in your

body than there are stars in the entire visible universe! The universe is so

big and yet it contains things that are so tiny which make up every physical

thing. Therefore, understanding what kind of world we live in is greatly

benefitted by studies into the fundamental particles that make the physical

world. My project is part of the larger efforts to improve the tools we have to

understand the smallest things in the world. By testing new hardware to make

sure it works as expected, we ensure that detectors in the upcoming years will

continue to push technological limits and human understanding.

So who am I? Why was I chosen to take part in a physics research experience for undergraduates (REU)? Well, my short answer would be that I am an undergraduate physics major attending Wheaton College in Wheaton, Illinois with a strong interest in doing research in the fundamental disciplines of science. What do I mean by fundamental? I mean any discipline that attempts to understand the basic constituents of the world, the basic interactions of those constituents, or the larger questions of how everything got here and how the universe as a whole is evolving. Most of these questions are investigated by the fields of particle physics and cosmology. With these being strong interests of mine for scientific, philosophical, and existential reasons, I jumped on the opportunity to work at Kansas State University (KSU) and get involved with the community of high energy physics (HEP).

If you want to know about me apart from just this research, then check out the “About Me” section below. For a more concise statement of what I have done throughout college, check out my CV.

Here is a quick summary of the accomplishments of my research experience with Dr. Andrew Ivanov. I, alongside another undergraduate, Wyatt Behn, participated in the testing of the updated Token Bit Manager (TBM) chips as well as several side projects analyzing simulated data of top quark scattering events. The data analysis projects provided great experience working with the data and analysis frameworks that particle physicists actually use to make testable predictions about what we might see in particle detectors. In addition, the TBM project represents a central part of the upgrades to the Compact Muon Solenoid (CMS) detector at the Large Hadron Collider (LHC) in Switzerland projected to take place in 2017. These upgrades will dramatically improve the capabilities of the CMS to probe the fundamental structures of the physical world, giving us all a chance to benefit from the things learned. Below is an outline of my project:

TBM Project: Test the functionality of TBM 08b and 09 chips to determine their peak overclocking speed as well as operating voltages

1. Learn TBM function in CMS pixel module

2. Learn testing code procedures

3. Train to operate testing equipment (Cascade probe station)

4. Run the tests on individual chips as well as wafers

Top Quark Project: Analyze simulated data of various top-quark production channels to find discriminating variables that support existence of the top quark

1. Learn ROOT data analysis framework

2. Write ROOT macro that compares signal and background data for any user-defined event

3. Analyze given data to find discriminating variables

For friends and family visiting this

page as well as anyone else who may not be familiar with all the background

information needed to make heads or tails of what I am doing, this section is

for you! I do not intend for the information on this web page to be

unapproachable to anyone interested in learning about what I did. So hopefully

I can successfully get readers of a wide variety up to speed on anything they

need in order to grasp what I did and its significance.

Ever since

the ancient Greek atomists reasoned that the world must be made up of tiny

indivisible “atoms”, many people, including scientists today, are interested in

seeing how much everything we see really can be broken down into a

comprehensible set of basic building blocks. Many people are familiar with the

table of elements and the atoms that constitute the elements. Chemistry focuses

a lot on understanding the properties of these elements and how they come

together to form the variety of complex things we see around us. Many people

also might know that atoms are made up of different particles called protons,

neutrons, and electrons.

However, we

have known these things for decades now and since then physicists have been

revealing that the world can be broken down even more.

Protons and neutrons, which make up the nucleus of the atom, are themselves made

up of what are called “quarks.” There are six different kinds of quarks and

these come together in different combinations to make much of what is studied

in particle physics. To make a long story short, research like this has

provided us with what is today referred to as the Standard Model of Particle

Physics, which is like a periodic table for physicists. It lists out what we

currently understand to be the basic building blocks of everything in the

universe. Just as the periodic table is arranged according to the properties of

the elements, the standard model organizes particles according to their

properties. Understanding these distinctions is not crucial to grasping the

main point that this is how physicists currently look at the world. Unfortunately, this isn’t the full story

and we know it.

The standard model organizes extremely

well much of what we see in the world. Anything you have looked at is probably

built from these particles. However, the standard model does not encapsulate

everything. Words like ‘dark matter’ and

‘dark energy’ represent two big categories of stuff in the world that do not

fit well into the standard model. Similarly the mathematics that goes with the

standard model does not all fit together like we want it to unless we imagine

that there are even more particles beyond the standard model, different

particle models altogether, or something completely different. Therefore,

physicists are actively trying to find ways to make sense of all the many

things scientists observe in the universe such that we can improve the Standard

Model to better describe the building blocks of the world.

Understanding

the smallest things in the universe requires more and more powerful “microscopes”.

I generalize microscopes to mean anything that allows us to look at or study

things too small to see with the naked eye. This is important because after a

certain point we cannot use magnifying lenses to look at small things. Light

starts to reflect off things like molecules, atoms, and fundamental particles

such that it will not produce an image like the one we get when looking at skin

cells under a microscope. However, we do have ways of studying really small

things like particles even if we cannot look at them directly. In the case of fundamental

particles, we have learned that smashing things together works pretty well. If

you have heard the term ‘particle collider’ before, then this is what was being

talked about. One important microscope for particle physicists is a particle

collider.

How does

colliding particles help us understand them better? Here is an analogy I have

found to be very helpful. Imagine we want to know what parts are inside a car.

Assuming we are not allowed to touch the car or open up the hood, one

conceivable way would be to take the car and smash it into something such that

parts start falling off for us to look at. If we smash the car into something

with enough speed, then we might even see parts of the engine come flying out. This

in many ways mirrors how we study particles with particle colliders, with one

big exception that I will get to in a bit. Anyway, we cannot hold a particle

(e.g. proton) in our hand and pull it apart and look inside it. However,

smashing particles into each other can cause things to come flying out that we

have never seen before. This is how we learned that protons and neutrons are

actually made of quarks. Moreover, just as a faster car leads to a more

energetic collision with more debris, more energetic particle

collisions lead to more particle debris to study. This is why you will

hear particle physicists focus on how energetic collisions are in a collider.

Right now the Large Hadron Collider in Europe is the most powerful particle

collider in the world.

I mentioned

that there was one big exception. The exception is that unlike cars that travel

around with all their parts inside them, particle collisions can release

particles that did not exist prior to the collision. Strange,

right? But we do in fact observe this. We collide

two protons together and see lots of electrons come flying out. However, we do

not believe that electrons are secretly hiding in the proton because the

electron has properties that the proton doesn’t have. It’s like seeing an electric

engine in the wreckage of two diesel trucks. The strange reason that particles

are allowed to appear in the explosion of the particle collision is because of

Einstein’s famous equation E = mc². It’s strange but actually not that

complicated to wrap one’s head around. Energy and mass are interchangeable

substances in the world. It is difficult to draw a really good analogy but it

can be helpful to think of it like phases of water where water can be sitting

in a cup as a liquid but then evaporate into a gas. The liquid and gas are

interchangeable substances. Now physicists might cringe at that analogy but I

think it helps to grasp the central idea that while we may observe two things separately

such as a liquid and a gas, it is possible for us to gain more of one by giving

up some of the other.

So matter

and energy can be interchanged such that in particle collisions matter is

converted into energy and then converted back into some different form of

matter. The reason this is probably difficult for most people is that energy is

a very confusing and vague idea. Gas and liquid make sense to us as well as

matter in general. While energy may be a word we use all the time it is

difficult to really understand what it is. Maybe some of us imagine laser beams

or energy blasts coming out of a ray gun. There is energy in these situations

but that is not all that energy is. Energy can also be related to motion such

that an object has more energy as it moves faster. Getting back to particle

collisions then, a moving particle has a lot of motion energy (i.e. kinetic

energy). That is what particle colliders try to give to particles just like we

wanted to give lots of motion energy to colliding cars. When the particles

collide and suddenly come to a stop, all that kinetic energy gets brought

together. The colliding particles explode in a burst of energy and then all that

energy starts to convert back into matter in the form of other particles. Phew!

If you can grasp this concept then the rest of this website should be much

simpler.
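
To put rough numbers on the idea that motion energy can turn into mass, here is a minimal Python sketch. It assumes a proton rest energy of about 0.938 GeV and an illustrative beam energy of 4000 GeV per proton; the function names and the beam energy are choices made for this example only. The result is an upper bound, since in real collisions the energy is shared among the pieces inside each proton.

```python
# Rough illustration of E = mc^2 in a head-on proton-proton collision.
# All numbers here are illustrative assumptions, not measured results.

PROTON_REST_ENERGY_GEV = 0.938  # proton mass expressed as an energy, in GeV


def collision_energy_gev(beam_energy_gev):
    """Total energy available when two equal-energy beams collide head-on."""
    return 2.0 * beam_energy_gev


def max_rest_mass_equivalents(beam_energy_gev, particle_rest_energy_gev):
    """Upper bound on how many particles of a given mass the energy could pay for."""
    return collision_energy_gev(beam_energy_gev) / particle_rest_energy_gev


beam = 4000.0  # assumed energy per proton, in GeV
total = collision_energy_gev(beam)
equivalents = max_rest_mass_equivalents(beam, PROTON_REST_ENERGY_GEV)

print(f"Energy available: {total:.0f} GeV")
print(f"Equivalent to roughly {equivalents:.0f} proton masses")
```

The point of the sketch is only the bookkeeping: the more motion energy the beams carry, the more mass the collision can, in principle, create.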

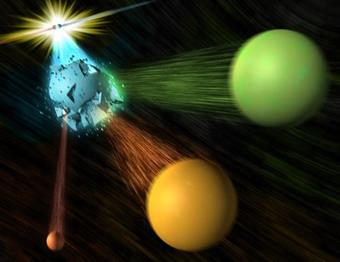

If you are

still following at this point, then there is one other caveat I want to throw

out. Unlike car parts that once ejected will probably remain as one piece as

they fly through the air and land on the ground, particles can spontaneously

break apart. As mentioned above, the particles formed in the high energy

collisions are usually very heavy (i.e. massive). Particles like this tend to

be extremely unstable and want to break down into less massive particles. I

always imagine really overblown balloons that are on the verge of popping. The

more massive a particle is, the quicker it tends to break down into lighter or

more stable particles. This break down is called particle decay and physicists

attempt to keep large tables of how long particles live before they tend to

decay. Really stable particles like electrons or protons take so long to decay

that we usually just say they never decay. Why is all this important? Well, when I

talk about how we actually detect these particles, it becomes important to note

that the particles we are interested in usually decay long before they are ever

registered in some detector device. Instead they form and decay almost

immediately and we only measure the particles they decay into. Why does this

not prevent us from ever knowing anything about these particles? Well these

particles happen to be nice enough to decay in very particular ways such that

the particles coming out of the decay can be treated as a fingerprint that the

particle was created. Just as we might have never seen the suspect at the crime

scene, we can use the fingerprint to identify that they were there.
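
To see why heavy particles never reach the detector, here is a minimal sketch of the standard estimate for how far a particle travels on average before decaying: (p / m) × c × lifetime. The particle values below are approximate and are assumptions for illustration (a muon lifetime of about 2.197 microseconds, and a top quark lifetime of very roughly 5 × 10⁻²⁵ seconds with the ~175 GeV mass quoted elsewhere on this page); the function name is made up for this example.

```python
# Average distance travelled before decay, in metres.
# Momentum and mass are in GeV (with c = 1), lifetime in seconds.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def mean_decay_length_m(momentum_gev, mass_gev, lifetime_s):
    """Return (p / m) * c * tau, the mean flight distance before decay."""
    boost = momentum_gev / mass_gev  # equals beta * gamma
    return boost * SPEED_OF_LIGHT_M_PER_S * lifetime_s


# Approximate, illustrative inputs (assumptions, not measurements from this project).
muon_length = mean_decay_length_m(momentum_gev=10.0, mass_gev=0.1057, lifetime_s=2.197e-6)
top_length = mean_decay_length_m(momentum_gev=100.0, mass_gev=175.0, lifetime_s=5e-25)

print(f"10 GeV muon: about {muon_length / 1000:.0f} km")
print(f"100 GeV top quark: about {top_length:.1e} m")
```

A muon easily crosses the whole detector, while a top quark decays after a distance far smaller than an atom, so only its decay products can ever be recorded.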

To summarize this section then,

particle physics is concerned with understanding the basic building blocks of

the world and noticing any patterns in their properties and behaviors. We hope

to ultimately possess a model that describes all of the most fundamental

particles but to do this we have a lot more of the world to explain. One major

way particle physicists investigate smaller particles is through particle

colliders. By smashing particles together, we are able to learn a lot about the

kinds of particles that exist in the world. Particles that collide with more

energy are more likely to generate new and interesting particles, similar to the

case with crashing cars. All this goes towards helping us understand the

particles we observe here on earth as well as the things we observe out in

space.

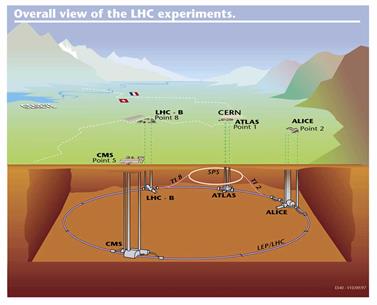

How do we

actually detect particles released in these collisions? As I said earlier, we

cannot just look at particles. So we smash particles together and send a bunch

of different particles scattering out in all directions, but how do we see those

particles? While this question can get into the specifics of detector electronics,

I think there is a much easier way to understand particle detection that is

helpful for making sense of what I worked on this summer. My work was for a

specific detector called the Compact Muon Solenoid (CMS) which is part of the

Large Hadron Collider (LHC), the world’s most powerful particle collider. If the

names don’t make sense, that’s fine. I’ll be more descriptive later. All of this

is owned and operated by the Conseil Européen pour la Recherche Nucléaire (CERN) which

translates to the European Council for Nuclear Research. CERN has been around

since the 50s and has been overseeing many physics projects investigating the

fundamental building blocks of our world. In 2008, they completed construction

of the LHC, which to this day is still the largest and most powerful collider

in the world. The LHC is a massive underground ring, 17 miles around, that

accelerates two streams of protons around in opposite directions. The use of protons

is why the collider is called a “hadron collider.” Hadron is a type of particle

just like SUV is a type of vehicle. So the Large Hadron Collider could also be

called the really big proton collider. Anyway, the streams of the LHC are

brought together at 4 separate collision/interaction points. At each of these

collision points is built a detector designed to measure particular features of

the particles that come out. One of these detectors is the CMS, which is a

general-purpose particle detector tasked with helping us understand a wide

array of particle physics questions.

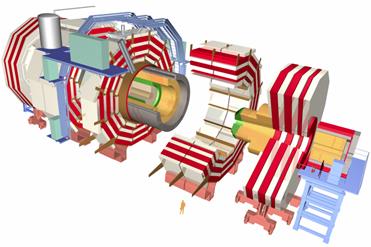

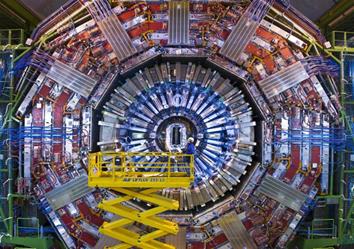

As pointed out above, CMS stands for

Compact Muon Solenoid. Breaking this name down it is actually not saying much

that is complicated apart from the word ‘Solenoid.’ Compact implies exactly

what you think it means. The detector is extremely compact with 12,500 metric

tons of technology crammed into a tube that is 22 meters long with a 15 meter

diameter. Muon indicates one of the many particles that gets released during

collisions that CMS is specially designed to detect. Solenoid is just a type of

magnet that gets used to determine more information about the particles released

in the collision. Essentially, CMS is a shorter way of saying “really tightly

packed particle detector that uses a high-tech magnet and is specialized for

detecting muon particles.”

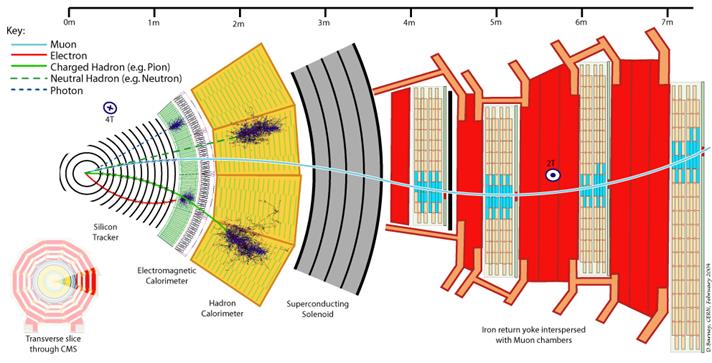

The actual

detector is like a big onion where different layers measure different things from

the collision that all gets put together to figure out what happened during the

collision. It is possible to go into a lot of detail but there is actually not

much one needs to understand to make sense of where my research fits in. The

detector consists of 5 groupings of layers (see image below). The first grouping

of layers (silicon tracker) tracks the position of most particles as

they go flying through, the second and third groupings of layers (calorimeters)

measure how much mass and motion energy certain particles have, the fourth grouping

of layers (superconducting solenoid) is the big magnet, and the fifth grouping of

layers (muon chambers) detects the position and energy of muon particles.

That’s everything in a nutshell.

If you can

grasp that then you can move on. However, you might still have at least two

questions. First, what is the purpose of the big magnet (i.e. superconducting

solenoid)? The answer is that we need the magnet to determine the energy of

particles. When particles move through the detector with a particular energy of

motion and mass, the magnet bends the movement of particles in particular

ways. What one needs to take away from this is that high energy means less curve and lower energy means more curve. No curve

indicates the particle is not affected by the magnetic field at all (i.e.

uncharged). For the two layers tracking the positions of particles, we measure

the curve of the trajectory and then can calculate the particle’s energy of

motion and mass. Cool, right?
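
The "more energy, less curve" rule can be sketched with the textbook relation between a charged particle's momentum across the field, the field strength, and the radius of its circular path: p ≈ 0.2998 × |q| × B × r, with p in GeV, B in tesla, and r in meters. The sketch below assumes a field of 3.8 tesla, which is the commonly quoted strength of the CMS solenoid; the function name is made up for this example.

```python
import math


def bending_radius_m(momentum_gev, charge, field_tesla=3.8):
    """Radius of the circular path of a charged particle in a magnetic field.

    momentum_gev is the momentum perpendicular to the field, charge is in
    units of the proton charge, and field_tesla defaults to an assumed 3.8 T.
    An uncharged particle is not bent, so its radius is infinite.
    """
    if charge == 0:
        return math.inf
    return momentum_gev / (0.2998 * abs(charge) * field_tesla)


slow = bending_radius_m(1.0, charge=1)      # low momentum: tight curve
fast = bending_radius_m(100.0, charge=-1)   # high momentum: nearly straight
neutral = bending_radius_m(5.0, charge=0)   # no charge: no curve at all

print(f"1 GeV track radius:   {slow:.2f} m")
print(f"100 GeV track radius: {fast:.1f} m")
print(f"Neutral particle:     {neutral}")
```

Running the relation backwards is what the tracker does in practice: measure the radius of the curve, then solve for the momentum.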

The second

question you might have is this: how do the particles just pass through the

detector if it is so compact? There are a few parts to answering this. The

first answer to this is that while it may be compact to us, it is not that

compact to high energy particles. A block of iron can seem heavy and dense but

in fact there is a lot of space for particles to fly through it unharmed. Sure

you shouldn’t expect your hand, a bullet, or a liquid to go right through a block

of iron, but particles like the kind that come out of the collisions are even

smaller and can therefore often pass through. The atoms that make up that block

of iron consist of electrons orbiting around the protons and neutrons in the

center. For comparison, the sizes involved would be like a soccer ball (the nucleus) resting

in the middle of a stadium while the electrons orbit at the edge of the

stadium. Therefore particles flying through materials are usually able to go

clear through much of the detector. The second part of the answer is that the

particles have high energies. Even if they do come close to particles in the

detector they usually can move through so fast and with so much “oomph” that

their movement is not greatly disturbed. The final part of the answer is that

certain materials interact with particles more strongly than others. The

detectors are designed to stop the particles flying out from the collision only

when we want them to. Therefore, we build the detector out of material that will

react minimally with the particles when we only want to measure their position

but will react strongly when we want to stop them in their tracks and measure

the total energy they had.

So in

summary, the CMS is one detector on the LHC. It uses a variety of compact

layers of detectors to determine the trajectory and energy of particles.

Knowing the energy and position of particles, physicists are able to determine

what kinds of particles were measured in the detector. As pointed out before, we

can frequently reason back from the particles we observe in the detector to the

particles that decayed long before they ever reached any part of the detector.

With the ability to figure out what happened in a collision we can look for

things that do not make sense to us. If we see particles appearing where we do

not expect them to or having energies we do not expect them to have, then we

have something to research. More often though, we have predictions about what

we expect to see and then we can test those predictions in these detectors to

see if we see what someone predicts.

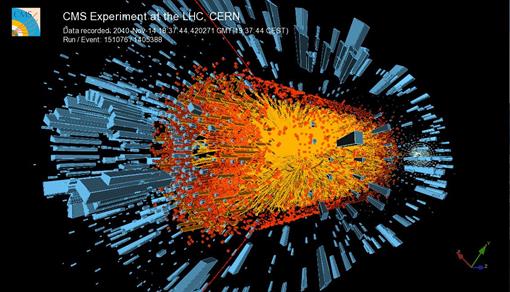

Now I get

to introduce where my research fits in to all this. It helps to start by

pointing out that the data taken by the CMS is not just a single novel’s worth

of information on position and energy of particles such that one person could

look over it in a couple days and have looked at most everything there is to

see. The data is actually so large that it takes thousands of people years to

look through it all and analyze it in every which way possible to see if we

might have missed anything hidden between the lines. Dealing with this

information overload is a great segue into my research.

When the

LHC is up and running, it does not produce one big

collision. Instead, it produces 40 million collisions every second. Thinking

back to the car crash analogy, that is not only a lot of cars but a lot more

car parts. The figure places the amount of information produced every second

around 40 terabytes. That is about twice the amount of information in the

Library of Congress every second! We cannot afford to store that information,

never mind analyze it. Therefore, it is crucial that we find a way to limit the

amount of data output by the detector. The solution comes from the fact that

most of the data recovered in these collisions only tells us boring stuff we

already know about particles. We want to look at the exciting new stuff that

happens at high energies. Therefore, particle detectors for a while now have

been using what’s called a Trigger system to cut down the information saved

from the collision. The Trigger system skims over the information recovered

from the collision and gets triggered when anything interesting comes through.

It then sends a trigger signal down to the first layer of the detector to the

electronics holding on to the collision information to tell them which

information to accept and then to dump the rest. The information that passes

this test is then sent to a bank of computers that further analyze the larger

sets of data to see if anything could be potentially interesting about the

collision. If the particular collision event seems interesting then it all gets

passed on through to be stored and analyzed in much greater detail by people

all over the world!
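
The numbers above, and the basic idea of a trigger, can be sketched in a few lines of Python. The first part simply divides the two figures quoted in the text. The second part is a toy filter: the event records, the `simple_trigger` function, and the 40 GeV threshold are all invented for illustration and are not how the real CMS trigger decides.

```python
# Part 1: arithmetic on the figures quoted in the text.
COLLISIONS_PER_SECOND = 40_000_000       # 40 million collisions per second
RAW_BYTES_PER_SECOND = 40 * 10**12       # about 40 terabytes per second

bytes_per_collision = RAW_BYTES_PER_SECOND // COLLISIONS_PER_SECOND
print(f"Roughly {bytes_per_collision:,} bytes per collision")


# Part 2: a toy trigger that keeps only "interesting" events.
def simple_trigger(events, threshold_gev):
    """Keep events whose largest recorded energy is at or above the threshold."""
    return [e for e in events if max(e["energies_gev"]) >= threshold_gev]


# Made-up events, each with a few recorded energies in GeV.
events = [
    {"id": 1, "energies_gev": [2.0, 5.5, 1.2]},
    {"id": 2, "energies_gev": [48.0, 3.1]},
    {"id": 3, "energies_gev": [0.7, 0.9]},
    {"id": 4, "energies_gev": [110.0, 95.0, 12.0]},
]

kept = simple_trigger(events, threshold_gev=40.0)
kept_ids = [e["id"] for e in kept]
print(f"Kept events: {kept_ids} out of {len(events)}")
```

Everything that fails the test is dropped on the spot, which is what makes the remaining stream small enough to store.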

So that is the overview of the trigger

system but where does the TBM come in? Well, the TBM is the chip that manages

the trigger signal once it gets down to the inner grouping of layers in the

detector where position information is stored. It is called the silicon tracker

in the image above. There is not one TBM chip though.

There are actually thousands. The inner grouping of layers in the detector is

subdivided a bunch of times to make it easier to manage all the data. One can

think of the inner layers of the detector like a company. All the data is

coming in and millions of ground level employees are recording the data as it

comes streaming through. It would be too much for the CEO to oversee everything

and so the employees are grouped into sections and branches that are managed by

different levels of supervisors and managers. Messages from the CEO are passed

down through the chain of command to tell the employees what needs to be done.

In this same way, the detector has millions of pixels that act like the

employees, recording the position of particles as they pass through. Many

pixels are grouped under a Read-Out Chip (ROC) that receives and stores all

information recorded by the pixels. Several ROCs are grouped into a pixel

module that is managed by none other than the TBM. So there you go. The TBM is

basically the manager of the module which has several ROCs to supervise the

pixel employees. As mentioned before, the TBM receives signals from higher-ups

about whether to accept or dump information being held by the ROCs and passes

that information along to the ROCs who then do as told. Accepted information is

then given to the TBM to be packaged and sent on over to company headquarters

for analysis.
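
For readers who think in code, the company analogy can be written as a toy model. This is only a sketch of the chain of command described above, not the real chip logic: the class names, the `"HEADER"` and `"TRAILER"` strings, and the hit format are all made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class ReadOutChip:
    """Stands in for a ROC: holds pixel hits until told to send or dump them."""
    roc_id: int
    buffered_hits: list = field(default_factory=list)

    def record_hit(self, column, row):
        self.buffered_hits.append((column, row))

    def read_out(self):
        """Hand over the buffered hits and clear the buffer."""
        hits, self.buffered_hits = self.buffered_hits, []
        return hits

    def dump(self):
        """Throw away the buffered hits."""
        self.buffered_hits.clear()


@dataclass
class TokenBitManager:
    """Stands in for the TBM: relays the accept/dump decision to its ROCs."""
    rocs: list

    def handle_trigger(self, accept):
        if not accept:
            for roc in self.rocs:
                roc.dump()
            return None
        packet = ["HEADER"]
        for roc in self.rocs:  # the "token" visits one ROC at a time
            for hit in roc.read_out():
                packet.append((roc.roc_id, *hit))
        packet.append("TRAILER")
        return packet


rocs = [ReadOutChip(0), ReadOutChip(1)]
rocs[0].record_hit(3, 7)
rocs[1].record_hit(10, 2)
tbm = TokenBitManager(rocs)

packet = tbm.handle_trigger(accept=True)
print(packet)
```

Passing the token from one ROC to the next is what keeps several chips from talking over each other on the same line.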

With

everything above as background, I can

now restate the purpose of my project with the TBM. CMS is going to upgrade

its detectors in 2017 to handle more collisions per second at higher energies.

One of these upgrades is a new TBM chip to manage each pixel module that is

better at managing ROCs, can process more trigger signals, and can last longer

in the detector before needing to be replaced. KSU was tasked by the scientists

working at CMS to test thousands of these chips to guarantee they can do what

we need them to do. Carrying out these tests then became a large portion of my

time here at KSU. Now you can try to go back to the first paragraph and see if

you understand my first sentence with the unnecessary amount of acronyms and

initialisms.

Research Description:

Following the outline given in the project overview, here is a more detailed account of the research undertaken this summer. While this is not necessary to read for those interested in only grasping the bigger picture of my research, it provides specific technical details that highlight what I had to learn, how I learned it, and how it applied to getting the final results.

TBM Project

1. Learn TBM function in pixel module - All TBMs used in CMS experiments have been custom-built, mixed-mode, radiation-hard integrated circuits that use a token-passing channel access method to control the signal readout from ROCs in the inner silicon tracking system. This management takes place in several ways that are important to understand when making sense of the tests run on the chips to ensure proper functioning. To understand the chip, I looked over copies of the chip documentation put out by Rutgers University for the TBM 05A, 08B, and 09 versions. From these I was able to get a good understanding of the chip’s principal functions, namely, distributing level 1 triggers, marking all accepted event data with a header and trailer, and communicating various other commands to the ROCs to deal with a variety of scenarios that result from collisions. The TBM does all this while communicating via at least 3 control signals: a 40 MHz clock synchronized to the CMS beam crossing, a serial data line for configuration settings, and a serial encoded trigger signal to receive triggers for distribution to the ROCs. The TBM 05A also had a separate active-low reset signal to reset control registers, but this was integrated into the other signals for later TBM versions. In addition to fewer control signals, the new TBMs offer more digital procedures, more cores, and greater radiation hardness. All of these go into boosting performance speed, functionality, and endurance. This is needed because CMS is planning on adding a new inner layer that is closer to the collision point and will therefore be exposed to more radiation.

2. Learn testing code procedures – With the TBM setup specified above, tests must aim to show that the TBM can successfully receive and respond to signals sent down the serial data line, properly pass on triggers to the ROCs, and then receive, tag, and send event data on to other electronics. Testing this is accomplished with the help of a testing board set up for these kinds of procedures. The testing code itself is written in C++. With prior experience in only MATLAB, I taught myself as much C++ as I could to be able to look over the testing program and see exactly how it runs a test. With the help of graduate students, textbooks, and online resources, I managed to gain a much better understanding of the code’s overall structure. Unfortunately, the specifics of the code involved communication with the field-programmable gate array (FPGA), which I was not able to take the time to understand. Therefore, while I couldn’t understand specific commands, I did follow the flow of the program through various Boolean functions with aggregate data type arguments containing values for various command signals, followed by if-statements to confirm expected outputs. Improvements to the testing code as well as the programming of the FPGA became crucial later on, so while I was not able to help on these fronts, learning the basics of the testing procedure helped me judge when and when not to suspect the code as the cause of problematic testing results.

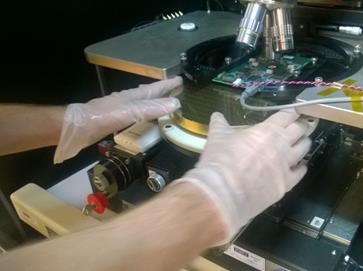

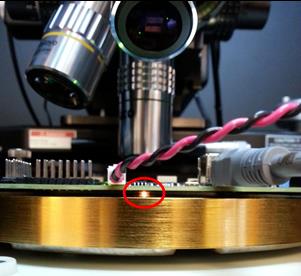

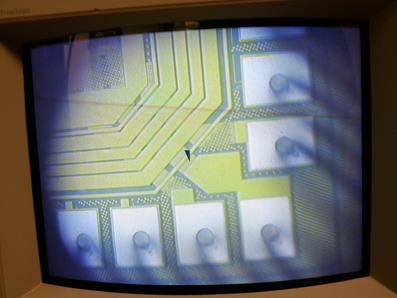

3. Train to operate testing equipment (Cascade probe station) – The tests on the chips were carried out on a Cascade probe station that requires an operator to place chips or wafers onto the station’s movable testing surface (i.e. the chuck) and then, by looking through a microscope, manually align the chip’s contact pads with the probe tips on the testing board held in place above it. This process involved controlling the station not only from the computer but also using components on the station itself to refocus the microscope or realign any parts of the station if necessary. While initially setting up to run tests, it was noticed that the chuck and testing board probes were not level. I helped in figuring out that the chuck needed to be readjusted and that the chuck itself was not a perfectly flat surface but instead had a slight curvature to help create the vacuum seal with the wafer boards.

4. Run the tests on individual chips as well as wafers – see Testing Procedure below
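The program flow described in item 2 (Boolean functions that take an aggregate argument holding command-signal values, followed by if-statements that confirm the expected output) can be illustrated with a minimal sketch. This is not the actual testing code: the `Command` struct, its fields, and the functions `sendToChip`, `runCommandTest`, and `runAllTests` are all hypothetical names, and the hardware is replaced by a stand-in that simply echoes the value it was given.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical aggregate holding the values for one command sent to the chip.
struct Command {
    std::string name;       // label used when reporting a failure
    std::uint8_t address;   // register address (illustrative only)
    std::uint8_t value;     // value written to that register
    std::uint8_t expected;  // value the chip should report back
};

// Stand-in for the hardware. A real test would go through the testing board
// and the FPGA; here the "chip" simply echoes the value it was sent.
std::uint8_t sendToChip(const Command& cmd) {
    return cmd.value;
}

// Boolean test function: send one command, then confirm the expected output.
bool runCommandTest(const Command& cmd) {
    const std::uint8_t response = sendToChip(cmd);
    if (response != cmd.expected) {
        return false;
    }
    return true;
}

// Run every command in turn and collect the names of the ones that failed.
std::vector<std::string> runAllTests(const std::vector<Command>& commands) {
    std::vector<std::string> failures;
    for (const Command& cmd : commands) {
        if (!runCommandTest(cmd)) {
            failures.push_back(cmd.name);
        }
    }
    return failures;
}
```

Keeping each check as a small function that returns true or false makes it easier to tell whether a bad result comes from one specific command or from the setup as a whole.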

Top Quark Project:

1. Learn ROOT data analysis framework – ROOT is a data analysis framework built on C++ that is designed to efficiently handle and analyze the large amounts of data that particle physics experiments usually produce. To get practice analyzing the kind of data that the TBM would be helping to gather, it was crucial that I learn how to work with ROOT files, using ROOT's very powerful functions and classes to produce figures that highlight interesting features of the data. I gained most of my understanding of ROOT from the primer and the various user guides that CERN has on the ROOT website. These, alongside the material I used to learn C++, were very helpful in developing the skills to write macros for later parts of this project.

2. Write macro that compares signal and background data for any user-defined event – Using ROOT files containing simulated data of scattering events for different top-quark production channels, I applied my knowledge of ROOT to create a program that produces histograms comparing the signal data from the top-quark decay with the background data of the different production channels. This program needed to do several things. The central part of it was a function into which I could enter any event data, say the transverse momentum of a jet or lepton, and specify which background data to compare it with, say pair production of a top and antitop quark. This function takes that information as input and outputs an image of a histogram with both plots superimposed on each other to highlight how much the signal stands out from the background. The program uses this function to run through a given set of event data, produce several such histogram plots, and save all of them into a single directory.

3. Analyze given data to find discriminating variables – With a program to create comparative histograms, the next step involved actually looking through the types of data recorded in each event and figuring out which data most clearly demonstrate the existence of the top quark. This requires two important features. First, the signal must be distinguished from the background clearly enough that we would be able to recognize it in actual data. Second, the signal should show the top quark to have the theoretically predicted mass-energy of ~175 GeV. With these criteria, I started out comparing the transverse momentum of different jets as well as leptons in the data and managed to find some usefully distinguishable variables. After that, I got started on using different ROOT functions to find the invariant mass of the original top quark by reconstructing it from the leptons, jets, and missing energy that we associate with neutrinos. Unfortunately, I was unable to finish this project.
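Two ideas from the items above can be sketched in a few lines of C++: combining the four-momenta of decay products to get an invariant mass, and binning a variable into a histogram that can be compared between signal and background. This is only an illustration under stated assumptions, not the macro described above. ROOT provides its own histogram and four-vector classes; the sketch uses plain C++ so that it compiles without ROOT. The names `FourVector`, `invariantMass`, and `normalizedHistogram` are hypothetical, and normalizing each histogram to unit sum is an assumed choice that lets samples of different sizes be compared by shape.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Four-momentum in GeV, using natural units (c = 1).
struct FourVector {
    double e, px, py, pz;
};

// The four-momentum of a parent particle is the sum of its decay products.
FourVector operator+(const FourVector& a, const FourVector& b) {
    return {a.e + b.e, a.px + b.px, a.py + b.py, a.pz + b.pz};
}

// Invariant mass from m^2 = E^2 - |p|^2.
// A slightly negative m^2 caused by rounding is clamped to zero.
double invariantMass(const FourVector& p) {
    const double m2 = p.e * p.e - (p.px * p.px + p.py * p.py + p.pz * p.pz);
    return m2 > 0.0 ? std::sqrt(m2) : 0.0;
}

// Fixed-width histogram over [lo, hi), scaled so the bin contents sum to 1.
// Values outside the range are ignored.
std::vector<double> normalizedHistogram(const std::vector<double>& values,
                                        std::size_t nBins, double lo, double hi) {
    std::vector<double> bins(nBins, 0.0);
    if (nBins == 0 || hi <= lo) {
        return bins;
    }
    const double width = (hi - lo) / static_cast<double>(nBins);
    std::size_t inRange = 0;
    for (double v : values) {
        if (v < lo || v >= hi) {
            continue;
        }
        std::size_t i = static_cast<std::size_t>((v - lo) / width);
        if (i >= nBins) {
            i = nBins - 1;  // guard against rounding at the upper edge
        }
        bins[i] += 1.0;
        ++inRange;
    }
    if (inRange > 0) {
        for (double& b : bins) {
            b /= static_cast<double>(inRange);
        }
    }
    return bins;
}
```

Calling `normalizedHistogram` once on the signal values and once on the background values, with the same binning, gives two sets of bin contents that can be drawn on top of each other. A variable is a useful discriminator when the two shapes differ clearly.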

Lab Setup: All chip and wafer testing (from here on just chip testing) was done in a clean room. Working in there required that we wear shoe covers. When handling wafers we also had to wear gloves and a surgical mask. The room was thoroughly cleaned on several occasions.

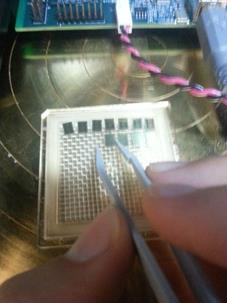

Step 1: The first step to testing a chip was centering it on the chuck as best as possible using hands or tweezers.

Step 2: Align chip under microscope and test board probes

Step 3: Use micrometer-precision movements of the probe station to align the probe tips with the contact pads on the chip. The chuck on which the chip rests can be moved in any direction as well as rotated to line up the pads just right.

Step 4: Once the pads were lined up, it became important to calibrate the probe station so that it knew exactly at what height the surface of the chip was. After zeroing in on this height, we could establish how far above the chip to define as "disengaged" and how far pressing down on the chip to call "engaged". With those two distances decided, it became possible to run tests. This process was more complicated for wafers, as we had to align not just one chip but the entire wafer, which is a grid of chips. The goals were the same, with the additional need to define how far apart neighboring chips were, both above and to the side of any given chip.

Step 5: Apply voltages across testing board and then run tests.
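The calibration in Step 4 amounts to a handful of numbers: the engaged and disengaged tip heights relative to the chip surface, and the spacing between chips on a wafer. A minimal sketch of how those numbers could define the positions visited during a wafer scan is given below. It is an illustration only, not the probe station's software. The names `WaferSetup`, `tipHeight`, `chipPosition`, and `scanOrder` are hypothetical, and all distances are assumed to be in micrometers.

```cpp
#include <vector>

// Chuck position in the horizontal plane (micrometers).
struct Position {
    double x, y;
};

// Hypothetical calibration values set by the operator.
struct WaferSetup {
    Position origin;    // chuck position with the reference chip aligned
    double pitchX;      // spacing between neighboring chips, side to side
    double pitchY;      // spacing between neighboring chips, row to row
    double clearance;   // tip height above the chip surface when disengaged
    double overtravel;  // how far the tips press past first contact when engaged
};

// Tip height relative to the chip surface (positive means above the surface).
double tipHeight(const WaferSetup& setup, bool engaged) {
    return engaged ? -setup.overtravel : setup.clearance;
}

// Chuck position that puts the chip at (row, col) under the probe tips.
Position chipPosition(const WaferSetup& setup, int row, int col) {
    return {setup.origin.x + col * setup.pitchX,
            setup.origin.y + row * setup.pitchY};
}

// Positions visited when stepping through a rows x cols grid, row by row.
std::vector<Position> scanOrder(const WaferSetup& setup, int rows, int cols) {
    std::vector<Position> positions;
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            positions.push_back(chipPosition(setup, r, c));
        }
    }
    return positions;
}
```

For a single chip only `tipHeight` matters. For a wafer, the two pitch values are what let the station move from one chip to the next without realigning each one by hand.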

All chips tested passed the minimum requirements, with many exceeding them. All information gathered from the tests was saved and sent off with the chips to other labs, where they will undergo radiation testing.

Final Presentation: Click here to download my presentation in PowerPoint and pdf formats.

Final Poster: Click here to see a pdf of my final poster

I will start by mentioning that I am not just a physics major. I am also a philosophy major. In fact, I entered college as a philosophy major thinking I would do nothing but philosophy and become a philosophy professor. What changed? Well, I realized that for me philosophy functions at its best when applied to helping understand and integrate what we learn from different disciplines. In general, philosophy is more about how you approach knowledge than what kind of knowledge you approach. Therefore, I became interested in seeing what other interests I had.

I have always been interested in physics because the most interesting questions in philosophy to me were the ones asking about the best methods for gaining knowledge, what we can know about the fundamental structures of the world, and what obligations we have because of the things we learn. Science, particularly physics, represents one of our most culturally cherished ways of gaining knowledge, and many physicists today stand in a phenomenal position to shed light on questions regarding the fundamental makeup of our world and to benefit the public with those findings.

So I picked up a physics major and decided to challenge myself to become an apt researcher who could someday contribute to these interesting questions, benefitting from a background in philosophical analysis and reflection. I should also mention that I did pick up a math major as well, given that math is the language of physics, but later decided I wanted to take classes on the language of Germans as well. So I dropped to a math minor alongside the physics and philosophy majors.

Beyond that, I am a California boy. I grew up in Orange County and then went to Illinois to learn what “real” winters are. I played varsity basketball in high school and continue to be involved in the sport today by coaching a basketball team at a juvenile facility in St. Charles, Illinois. I really like spicy food and take pride in my growing collection of hot sauces. I love learning, and I love teaching and getting people excited to learn. Hopefully I have accomplished the latter here.

Image Credits:

Periodic Table: http://www.ptable.com/

Atom Picture: http://chemistry.tutorcircle.com/inorganic-chemistry/atomic-structure.html

Greek Elements: http://www.webwinds.com/myth/elemental.htm

Standard Model: http://astrophysics.pro/tag/standard-model/

Microscope: http://accu-scope.com/products/3075-stereo-microscope-series/

Size Comparison Chart: http://osuwomeninphysics.wordpress.com/2013/03/13/smaller-than-microscopic-2/

Car Collision: http://www.carinsurancecomparison.com/when-should-i-drop-my-collision-coverage-from-my-car

Colored Beam Collision: http://iktp.tu-dresden.de/~cgumpert/Talks.php

Einstein Photo: http://mentalfloss.com/article/49222/11-unserious-photos-albert-einstein

E=mc2 equation: “E=mc2-explication” by JTBarnabas

Particle Decay: http://scitechdaily.com/babar-data-suggests-possible-flaws-in-the-standard-model/

LHC schematic: http://home.web.cern.ch/topics/large-hadron-collider

Interaction Point: http://lhc-machine-outreach.web.cern.ch/lhc-machine-outreach/collisions.htm

CMS schematic: http://cms.web.cern.ch/

CMS front-end picture: http://home.web.cern.ch/about/experiments/cms

CMS cross section: http://home.web.cern.ch/about/experiments/cms

CMS collision 3D figure: http://cms.web.cern.ch/news/cms-observes-hints-melting-upsilon-particles-lead-nuclei-collisions

Pixel Module: http://arxiv.org/ftp/arxiv/papers/0707/0707.1026.pdf

This program is funded by the National Science Foundation through grant number PHY-1157044. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.